Why we pivoted to Epsilon and what we learned doing it

In April of last year, we pitched our product to over 1,500 investors. In July, we shut it down and started working on something new. Here's why.

In October ’22, my cofounder and I started working on a developer tool that was inspired by an internal tool we had used as software engineers in Big Tech.

By November, we had made enough progress to get into Y Combinator, a premier startup accelerator. In April, we demoed that product in front of over 1,500 investors.

And in July, we shut it down.

It was a scary decision but it was worth it. Here’s how it happened and what we learned.

A year ago today, we called ourselves Planar and were building a GitHub integration to help software engineers review code faster. 30% of software engineering time is spent doing code review and it’s going to get worse with generative AI. We wanted to build a modern code review tool to help engineers easily find issues, leave better feedback, and review changes in less time.

On the face of it, we thought we had a great idea. People told us that it resonated with them, that they had felt the pain of doing hours of code reviews, and that they were eager to incorporate it within their workflow.

We also had a story. We were democratizing an internal tool that we had used ourselves in Big Tech. These kinds of stories had worked in the past (e.g. PagerDuty), so there was at least some evidence that we could pull it off.

We felt great. But as the months passed, users kept signing up and we still had no revenue.

Every day we’d ask ourselves: what was wrong with our business? These were the conclusions we came to.

Chasing PLG led us into a procurement wall

Our product was optimized for PLG (product-led growth). Any developer could download our extension, authorize access, and immediately start using it. There was no need to first book a demo, or go through a hands-on onboarding session, or sign an upfront contract. None of that. Simply download and start.

Most successful developer tools start this way and so we thought it made sense for us too.

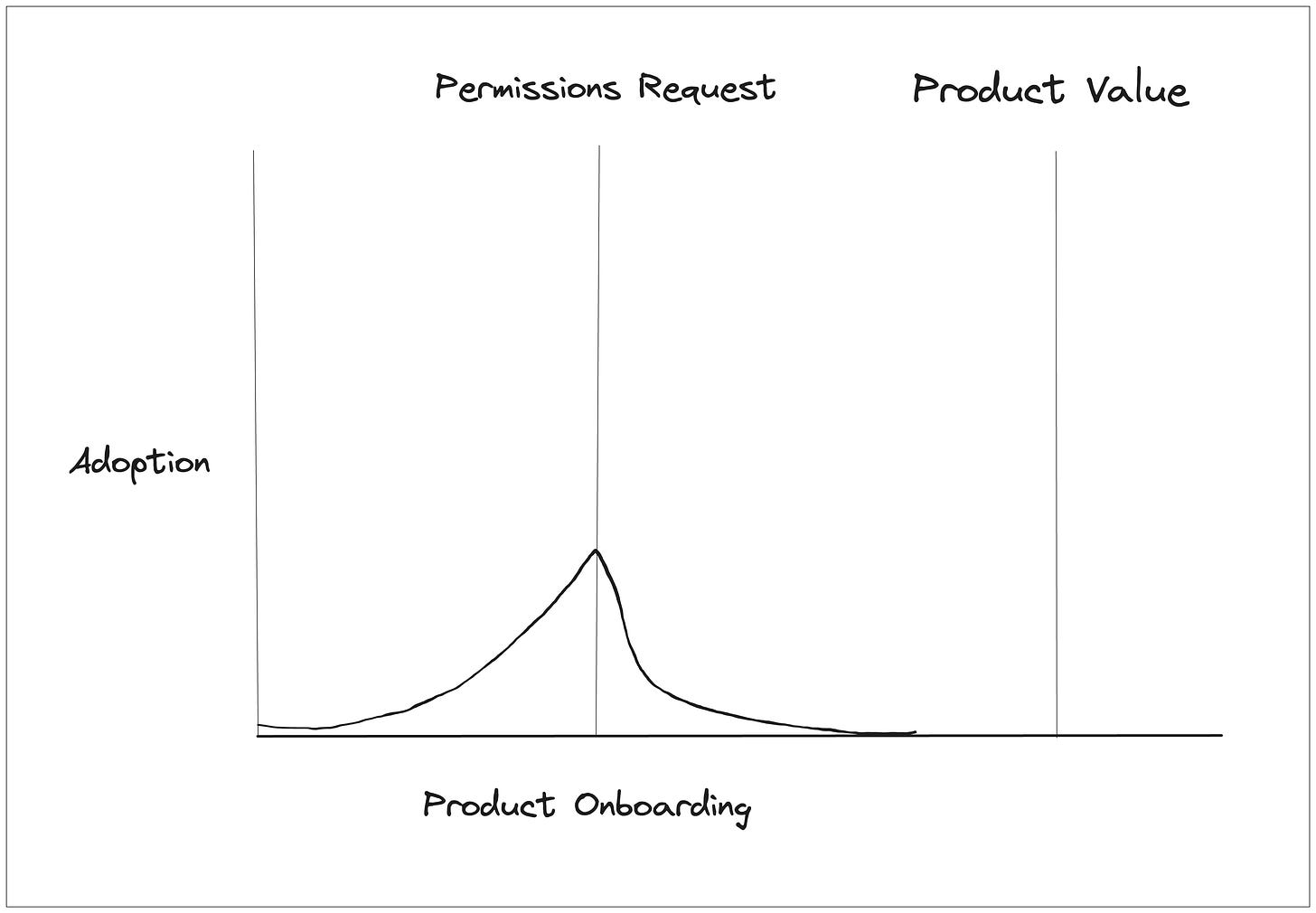

However, we soon hit a snag in that strategy. We kept seeing people download our product and then fail to do anything with it. When we asked, we heard that they needed someone on their security team to authorize permissions. Our tool required access to source code, which is a sensitive company resource to say the least.

This led us into a classic Catch-22. We needed user feedback to make the product better and to start charging for it, but we couldn’t get feedback because individual engineers didn’t have the time, energy, or authority to go through a lengthy procurement process.

Lesson: PLG doesn’t work well for products that require sensitive integrations.

Sales-led growth led us into different pain points

We kept hope and changed strategies. Instead of relying on PLG, we started trying to sell directly to engineering teams. Since individual engineers often don’t have control over security permissions, we tried selling to management. We pitched them on the wins their teams could have if they adopted better code review tooling. Faster release cycles, more productivity, higher code quality.

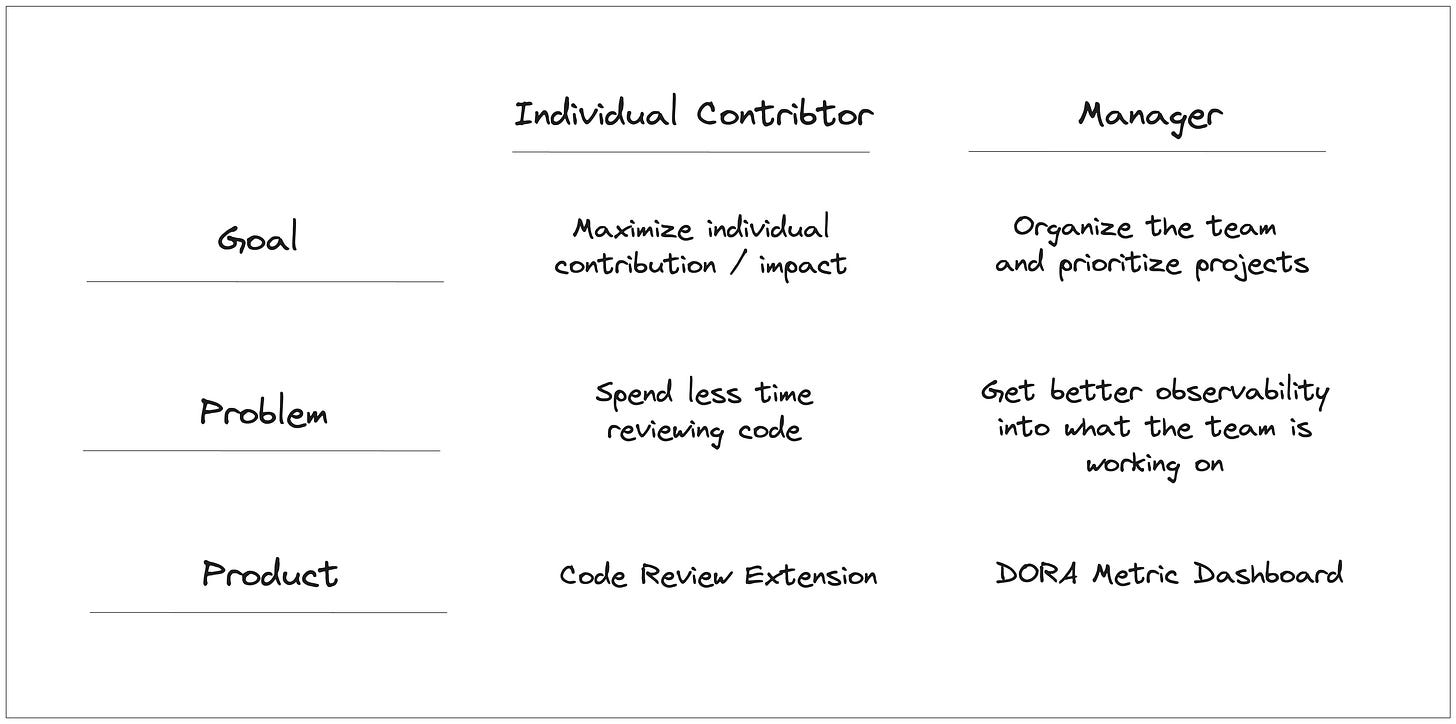

Yet code review wasn’t really top of mind for engineering management. They claimed that their engineers used whichever tools they wished and that they didn’t really have the authority (or the audacity) to tell engineers which tools they should use.

Instead, they would often ask us to build dashboards to manage DORA metrics. They wanted us to build ways to track their engineers’ productivity rather than tools to help them be more productive. These were clearly different problems from the one we were trying to solve, but this made sense given that we were now talking to managers, who had different incentives and objectives. Unfortunately, it was not something we were particularly interested in building, given the plethora of existing tools on the market and the general industry skepticism towards DORA metrics.

Lesson: Even within the same department, different user personas have different goals and therefore want different products.

The problem we were solving was real, but our product couldn’t solve it

Almost every developer we talked to hated doing code review. They considered it one of the least desirable parts of their job, yet something they still had to spend hours per week doing.

Unfortunately, our product couldn’t fully automate code review. Or even make it pleasant. It just made it “less bad”. And the unfortunate truth was that even with the internal tool we used in Big Tech, code review was still a pain.

We had several features that we thought made it easier, but none of them were compelling enough for individual engineers to fight for security access or for managers to insist that their teams adopt it.

Our demos fell flat.

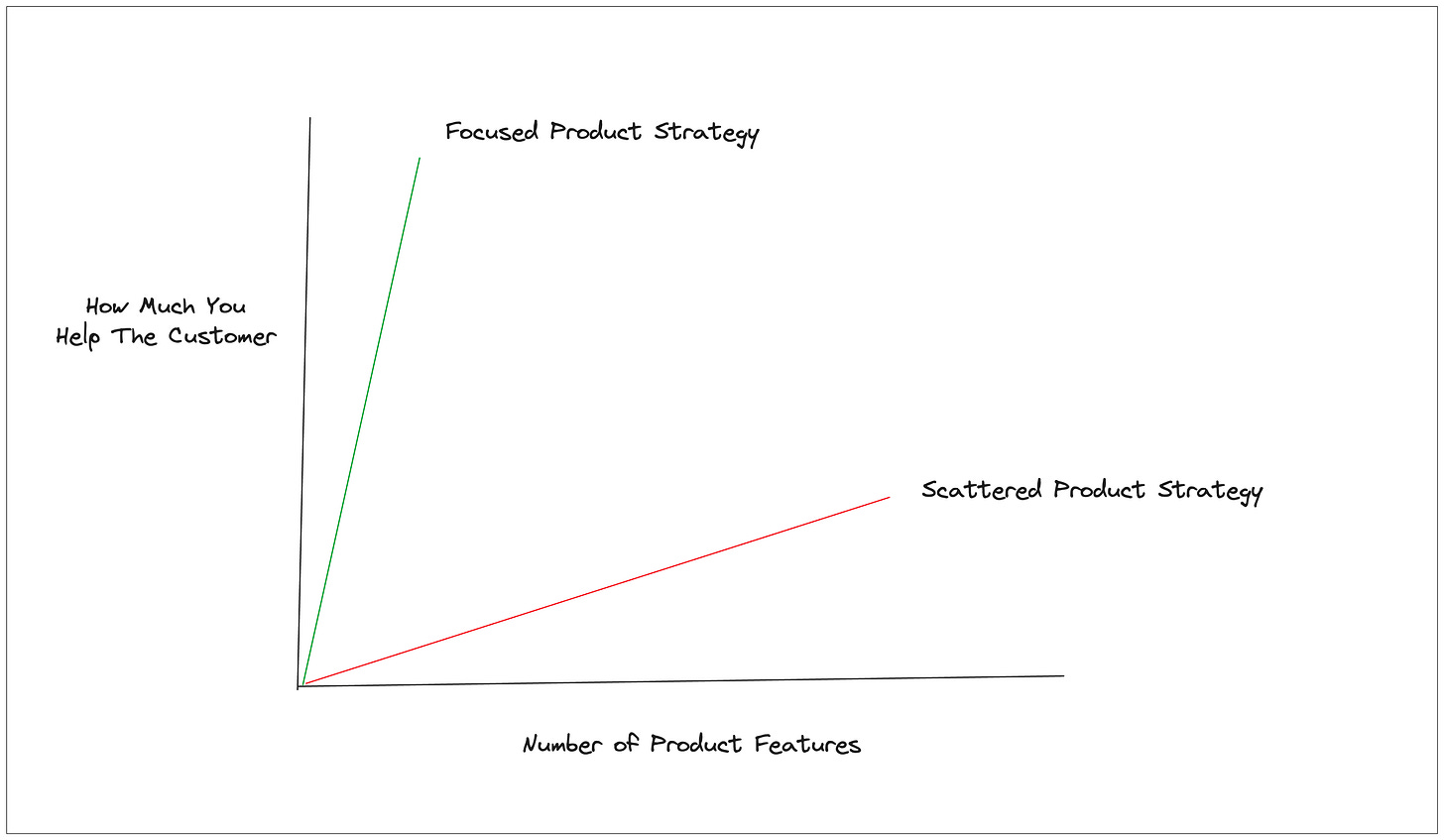

The problem we were trying to solve is certainly a hard one, but we were also scattered in our approach to solving it. When we felt our demos failing, we responded by adding more features instead of doubling down on making our current ones great. This made demos even less compelling. This was a mistake.

In the end, we found ourselves trapped with a messy product and a messy pitch.

Lesson: More features aren’t better. In fact, they might be worse.

The majority of startups go through a pivot at some point in their journey. For us, the battle scars of working on this project built up to the point where we felt more energized building in a new direction.

It was at this time that a request came up from someone in our network to help them use AI for their scientific research. They had hundreds of papers that they needed to read and wanted a tool to help them quickly extract key insights from those documents. This led us down a new rabbit hole and now we’re 8 months into building Epsilon!

I can’t wait to share more learnings from that experience soon!